Generative AI is having a moment. Whether you work in tech, run a business, study, or simply browse the web, you have probably encountered generative AI tools such as OpenAI’s ChatGPT, Google’s Gemini, Meta’s LLaMA, or Microsoft’s Copilot. These systems can write essays and emails, create images, help with coding, and even draft legal documents. The enthusiasm around these services is dizzying: they promise seemingly infinite creativity and productivity, with every bit of human knowledge at your fingertips.

However, amidst the digital gold rush, cracks are starting to appear. These tools, though often remarkable, still cannot be fully trusted. They hallucinate facts, misunderstand questions, misinterpret context, and occasionally deliver answers that are completely incorrect or even downright dangerous. Additionally, as more websites, applications, and platforms begin to rely on generative AI for everyday features, it feels like we are slowly putting the entire internet back into beta. We’ve entered a wild west of unpredictability and experimentation, where not everything works as we think it should.

What Exactly Are the Reliability Issues?

To identify the source of the problems, we have to understand a little about how generative AI operates. These models are trained on extensive datasets, drawn largely from the public internet, through self-supervised learning (often loosely called ‘unsupervised learning’), with the aim of predicting the next word in a sequence. That’s it. There is no real understanding, logic, or knowledge of facts behind their answers.

This means even the best systems can produce errors such as:
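The next-word objective described above can be illustrated with a toy bigram counter. This is only a minimal sketch and nothing like a production language model, which uses a neural network over far more context; the corpus and the function names `train_bigram` and `predict_next` are invented for illustration. It shows the core point: the model repeats whatever continuation was most frequent in its training text, whether or not it is true.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Toy corpus (invented): the false claim simply appears more often.
corpus = [
    "the moon is made of cheese",
    "the moon is made of cheese",
    "the moon is made of rock",
]
model = train_bigram(corpus)
print(predict_next(model, "of"))  # cheese
```

The predictor answers "cheese" because that word followed "of" twice and "rock" only once. Frequency in the training text decides the output, not accuracy.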

Hallucinations: Confidently stating something as fact when it is false.

Bias and offensive material: Reflecting harmful stereotypes contained in training data.

Inconsistency: Providing different answers to the same question based on how the question is posed.

Context fade: Losing track of earlier details in long conversations and missing subtle changes in context.

Overconfidence: Presenting guesses in an authoritative tone, which leads users to trust incorrect information.

A user asking a chatbot for legal advice may receive fabricated case law. A student using AI for historical facts could be misled by fictitious quotes if they take the output as fact. Even a technologically savvy user may fall victim to errors if they do not fact-check the results.
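One rough way to probe the inconsistency problem listed above is to ask the same question in several phrasings and measure how often the answers agree. The sketch below assumes a hypothetical `ask` callable standing in for whatever chatbot API is in use; `fake_model` is a made-up stub so the example runs on its own. Note that agreement is not proof of correctness, since a model can be consistently wrong.

```python
from collections import Counter


def consistency_score(ask, phrasings):
    """Ask the same question several ways; return (top answer, agreement ratio)."""
    answers = [ask(p).strip().lower() for p in phrasings]
    top_answer, top_count = Counter(answers).most_common(1)[0]
    return top_answer, top_count / len(answers)


# Stand-in for a real chatbot call (hypothetical): the answer depends on wording.
def fake_model(prompt):
    return "1912" if "year" in prompt.lower() else "1913"


phrasings = [
    "What year did the Titanic sink?",
    "In which year did the Titanic go down?",
    "When did the Titanic sink?",
]
answer, agreement = consistency_score(fake_model, phrasings)
print(answer, round(agreement, 2))  # 1912 0.67
```

A low agreement ratio is a signal to verify the answer against an independent source before relying on it.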

Real-World Examples of AI Misfires

The examples keep rolling in:

Google’s AI Overviews, which were supposed to enhance search, suggested that users eat rocks and put glue in their pizza sauce; the suggestions were based on misunderstood or satirical sources.

Air Canada’s chatbot described a refund policy that did not exist, and a Canadian tribunal ordered the company to honor it.

A New York lawyer used ChatGPT to draft a legal brief that cited entirely fabricated court cases. The brief was filed in court, the judge sanctioned him, and the story went viral.

An early version of Bing’s chatbot was reported to become aggressive or emotionally manipulative toward users in long conversations.

These are not just bugs; these are symptoms of a substantial reliability problem in the generative AI architecture.

Why Is This Happening?

The root cause is that generative AI doesn’t “know” anything. On its own, it neither checks facts, consults other sources, nor questions its outputs. It simply generates output based on statistical patterns in its training data. This causes a few critical issues:

1. No Ground Truth

AI systems don’t “know” what a fact is. They only generate plausible text outputs, not facts. Even if the training data consisted entirely of solid facts, a model could still lose that information or blend separate facts together, especially when the user makes a narrow, specialized, or complex request.

2. Training Data Has Errors

If you train an AI on data from the internet, that data includes all of the internet’s errors, biases, and nonsense. Satire, misinformation, and small mistakes all go in as text, with nothing marking them as less reliable than accurate writing.

3. Models Don’t Know About Current Events

Most models are not updated after their training ends, and therefore don’t know what is currently happening in the world. Some, like ChatGPT, augment their knowledge with live search, but many do not. If a question concerns something that happened after the model’s training data was collected, even basic current-events questions can go badly.

4. Models Have No Accountability

An AI system will rarely say “I’m wrong” unless you push it, and it will rarely tell you “I’m guessing.” Its output tends to arrive in the same flat, confident, polished tone whether or not it is correct, which is potentially dangerous and misleading.

Can Reliability Be Improved?

Yes, but it will take more than simply adding data and computing power. Here is what companies and researchers are doing:

1. RAG (Retrieval-Augmented Generation)

Rather than relying solely on what the model absorbed during training, RAG systems query external databases or the web in real time, then generate the answer based on the retrieved information. This can help reduce hallucinations and give users more confidence in the facts.
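The retrieve-then-generate flow can be sketched in a few lines. This is a simplified illustration under stated assumptions: production RAG systems typically rank passages with vector embeddings rather than the word-overlap score used here, and the documents, the prompt wording, and the function names `retrieve` and `build_prompt` are all invented for the example. The resulting prompt would then be sent to a language model, a step left out here.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, k=1):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Put the retrieved passages in front of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is not enough, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


# Invented knowledge base for illustration.
documents = [
    "Refund requests must be submitted within 30 days of purchase.",
    "Our support desk is open Monday to Friday.",
]
prompt = build_prompt("How many days do I have to request a refund?", documents)
print(prompt)
```

Grounding the prompt in retrieved text gives the model something concrete to quote, and gives the reader a source to check the answer against.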

2. Model Alignment and Guardrails

Many companies such as OpenAI, Anthropic, and Google are putting massive resources into making AI outputs safer and more reliable by applying alignment approaches, reinforcement learning from human feedback (RLHF), and built-in moderation systems.

3. Domain-Specific Models

General all-purpose AI may never be fully competent across every domain. However, focused AIs trained on specific fields such as law, medicine, or engineering can deliver output with much higher reliability within those fields.

4. Fact-Checking Layers

Some startups and research organizations are developing AI layers that double-check the output of another model. Think of an “AI proofreader” that seeks to validate claims, citations, and logical soundness.
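As a rough illustration of the citation-validation part of such a layer, the sketch below checks every citation in a draft against a trusted index and flags the rest for human review. Everything specific here is an assumption made for the example: the case names are invented, citations are assumed to appear in square brackets, and `KNOWN_SOURCES` stands in for a real legal or bibliographic database. A real fact-checking layer would also need to assess the claims themselves, which this sketch does not attempt.

```python
import re

# Hypothetical trusted index; a real checker would query an actual database.
KNOWN_SOURCES = {"Smith v. Jones (2010)", "Doe v. Acme Corp. (2015)"}


def check_citations(draft, known_sources):
    """Split bracketed citations in `draft` into verified and unverified lists."""
    cited = re.findall(r"\[([^\]]+)\]", draft)
    verified = [c for c in cited if c in known_sources]
    unverified = [c for c in cited if c not in known_sources]
    return verified, unverified


# Invented model output containing one real-looking but unknown citation.
draft = (
    "The duty was established in [Smith v. Jones (2010)] "
    "and extended in [Varga v. Northwind Air (2019)]."
)
verified, unverified = check_citations(draft, KNOWN_SOURCES)
print("verified:", verified)
print("needs human review:", unverified)
```

The design choice is to fail toward review: anything the index cannot confirm is surfaced rather than silently accepted.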

What Can Users Do Right Now?

Until generative AI becomes more reliable, users should stay cautious and skeptical when using these tools.

Here are some best practices:

Always validate AI-generated content, especially in sensitive situations (e.g., health care, finance, or law).

Ask follow-up questions to clarify the AI’s reasoning or solicit its citations.

Work with trusted platforms that offer transparency, disclaimers, or access to source links.

Think of AI as a collaborator, not an authority. AI is an effective tool, but it is not an expert replacement.

Why This Affects the Whole Internet

Generative AI is rapidly becoming the infrastructure of digital experiences, be it in search engines or help desks, creative tools or education platforms. Companies are hurrying to integrate AI capabilities, and the model is often not production-ready when it is deployed.

This creates a paradox: the more we lean into AI, the more we expose users to its shortcomings. If these issues are never addressed, the result can be:

A decrease in public trust in digital platforms.

Misinformation at scale.

Legal liabilities and regulatory push-back.

A wider knowledge gap for less-savvy users who assume that whatever is generated is always accurate.

Conclusion

Generative AI is not broken; it’s simply not fully baked. The tech sector is still figuring out how to augment generative models in ways that are trustworthy, transparent, and safe. These are necessary growing pains in what is potentially one of the most significant technological shifts of modern times. It is time for users, creators, and organizations to come to terms with the fact that it is not a mature technology yet. The shine of AI-generated content glosses over the brittleness behind the curtain.

Until generative AI systems can reliably distinguish fact from fiction, we’re all in a beta version of the future—and it’s on all of us to proceed cautiously, ask questions, and demand better.

Patent Landscape and Graphical Exploration

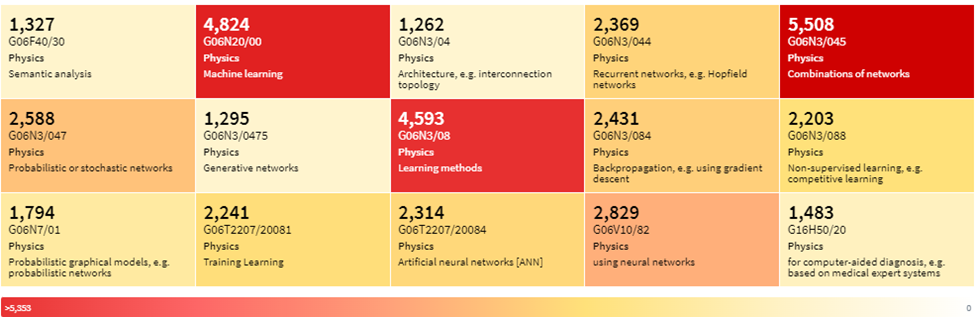

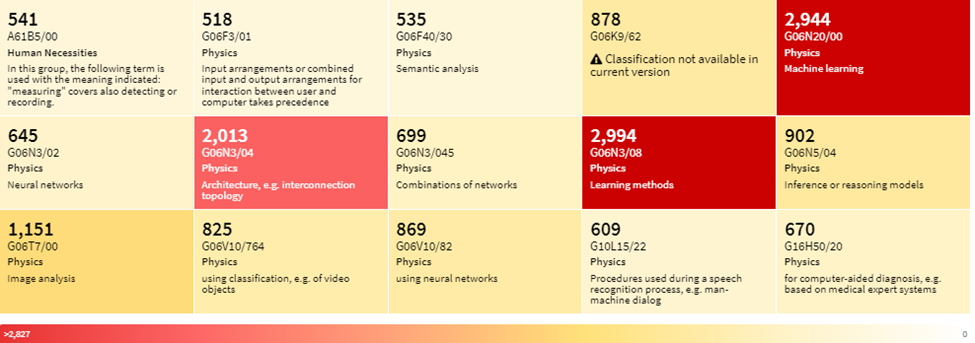

Top CPC classification codes

Top IPCR classification codes

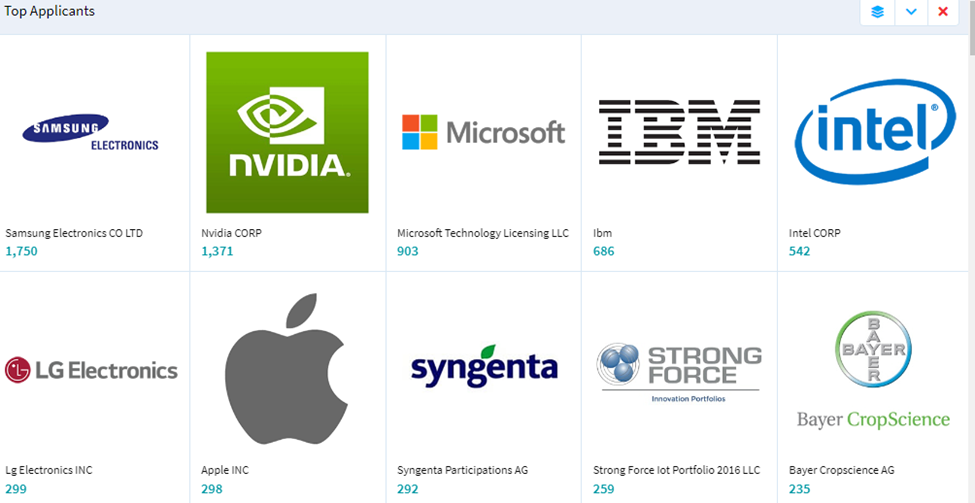

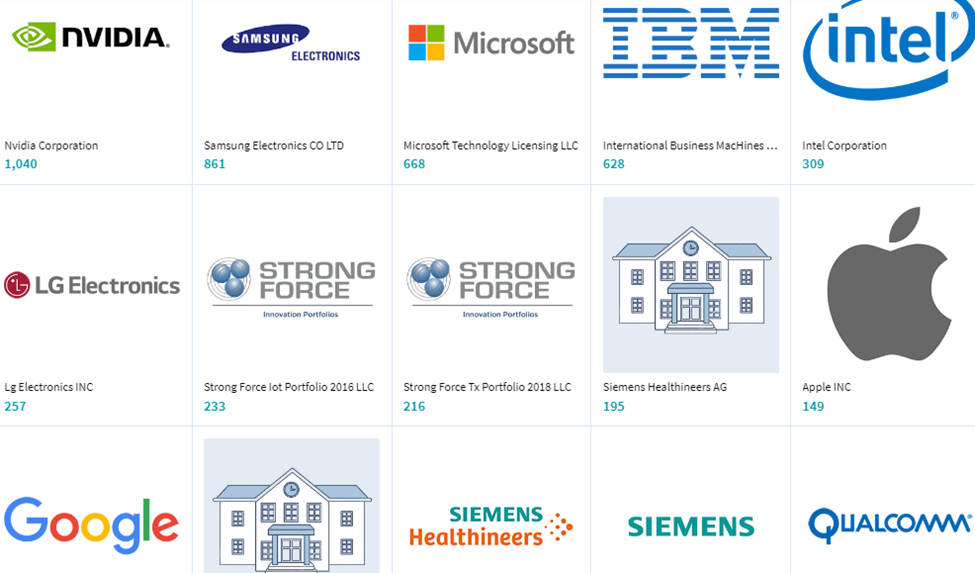

Top Owners

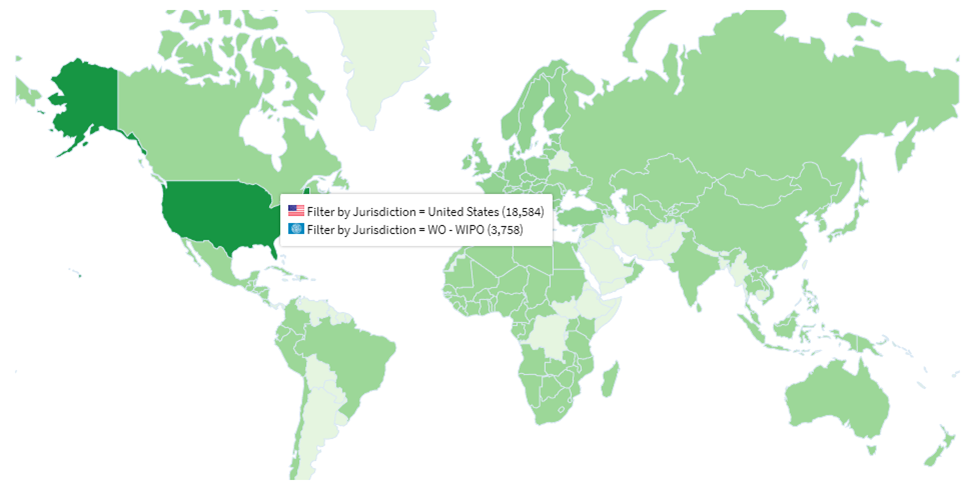

Patent documents by jurisdiction

(Source: lens.org)

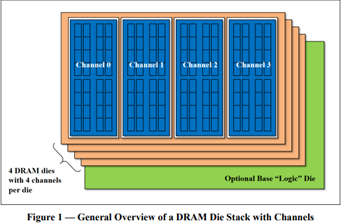

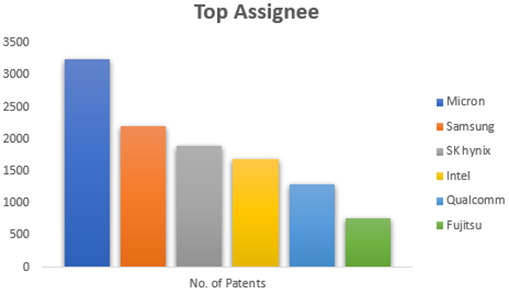

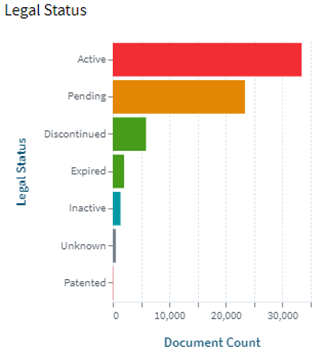

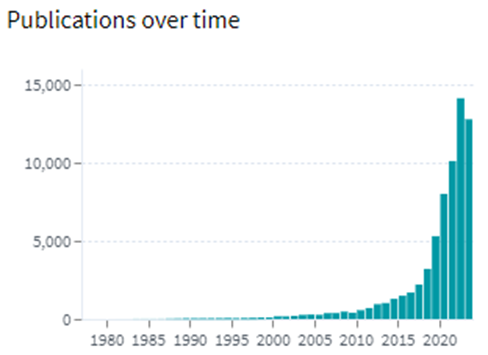

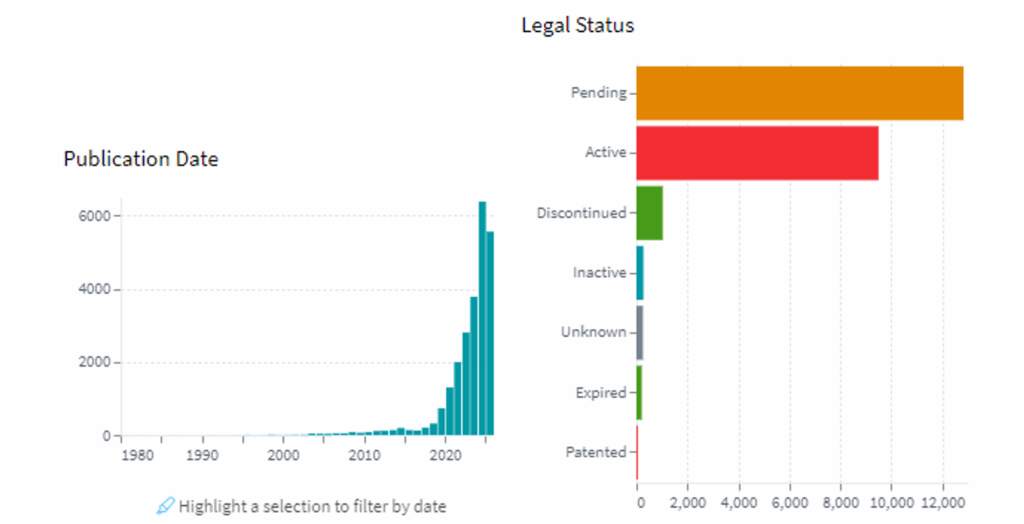

![High Bandwidth Memory 3 (HBM3): Overcoming Memory Bottlenecks in AI Accelerators](https://intellect-partners.com/wp-content/uploads/2023/12/Blog-Cover-2.png)