Gaming thrives on creativity—until patents come into play. While it’s natural for developers to protect their innovations, some patents have had a massive impact on game design. Imagine if the first open-world action RPG had been patented—games like The Witcher, Elden Ring, or Skyrim might never have taken the genre to new heights!

While most core gameplay elements remain unpatented, a few game-changing mechanics have been legally locked away. The question is: do these patents protect creativity or hinder it? Let’s take a look at some of the most famous (and infamous) patented game mechanics.

1. The Nemesis System – An Army of Personalized Enemies

Patent Owner: Warner Bros.

Patent Expires: 2036

Middle-earth: Shadow of Mordor introduced the Nemesis System, which turned ordinary enemies into dynamic rivals who remember past encounters. It added an entirely new layer of depth to the game, making each playthrough unique.

Unfortunately, Warner Bros. patented this system, which has discouraged other developers from building closely similar mechanics. So far it has appeared in only one sequel, Middle-earth: Shadow of War, which feels like a waste of an incredible idea.

2. Loading Screen Minigames – Fun While You Wait

Patent Owner: Namco

Patent Expired: 2015

Before SSDs made load times nearly nonexistent, loading screen minigames were a great way to keep players entertained while a game loaded in the background. Namco patented this mechanic, so for two decades most other developers left players staring at dull loading bars rather than risk infringing.

The patent expired in 2015, so developers are free to bring back this beloved feature. The irony? Load times are now so fast that minigames might not even be necessary anymore.

3. Ping System – Communication Without Words

Patent Owner: EA

Patent Expires: 2039

Apex Legends’ ping system revolutionized team communication by allowing players to highlight enemies, items, and locations without voice chat. EA owns the patent but included it in its 2021 accessibility patent pledge, promising not to enforce it against developers who build similar mechanics.

It’s a rare case where a patent holder has chosen to keep an intuitive communication tool available to all developers rather than locking it away.

4. Dialogue Wheel – Talking in Circles (Literally)

Patent Owner: EA

Patent Expires: 2029

Games like Mass Effect popularized the dialogue wheel, an intuitive way to present conversation options in a circular interface. The system makes interactions smoother by giving players a quick idea of the tone of their response.

Thankfully, the patent doesn’t prevent all forms of multiple-choice dialogue, meaning games like The Witcher 3 and Cyberpunk 2077 could still implement their own unique conversation mechanics.

5. Floating Directional Arrows – Crazy Taxi’s Legacy

Patent Owner: Sega

Patent Expired: 2018

Before open-world GPS systems, Crazy Taxi introduced a floating arrow that guided players to their destination. Sega patented it and later sued over The Simpsons: Road Rage, which used a nearly identical feature.

Since the patent expired in 2018, we’ve seen navigation systems evolve into more immersive tools, but Crazy Taxi’s floating arrow remains a nostalgic classic.
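To make the floating-arrow idea concrete, here is a minimal sketch of how a guidance arrow could be aimed at a destination. It is not Sega’s implementation or the method described in the patent: the function name `arrow_angle`, the 2D coordinates, and the straight-line aiming are all assumptions made for illustration.

```python
import math

def arrow_angle(player_pos, player_heading_deg, destination):
    """Return how far the arrow should turn, in degrees, relative to the
    direction the player is facing. Positive values mean counter-clockwise.

    Hypothetical helper: assumes a flat 2D world where a heading of 0
    degrees points along the +x axis.
    """
    dx = destination[0] - player_pos[0]
    dy = destination[1] - player_pos[1]
    # World-space angle from the player to the destination.
    bearing = math.degrees(math.atan2(dy, dx))
    # Make it relative to the player's heading and wrap into [-180, 180).
    return (bearing - player_heading_deg + 180) % 360 - 180
```

A destination straight ahead yields an angle near 0, so the arrow points forward; one directly to the player’s left yields roughly 90. A real game would likely smooth the rotation and account for the road layout, which this sketch ignores.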

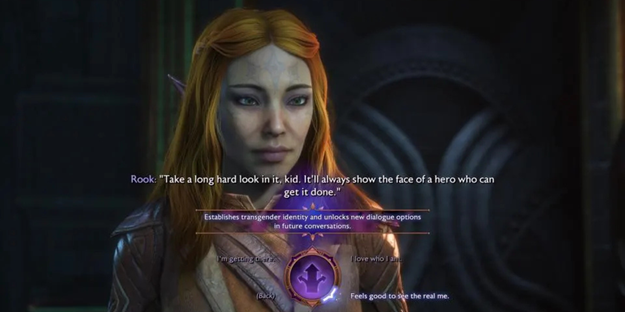

6. Poké Ball Mechanics – Gotta Catch and Patent ‘Em All

Patent Owner: Nintendo & The Pokémon Company

Patent Expires: 2041

Pokémon’s capture and storage system using Poké Balls is one of gaming’s most iconic mechanics. Although the mechanic has existed for decades, it wasn’t patented until 2021, likely to prevent competitors from replicating the concept.

Patents in this area are already making waves: in 2024, Nintendo and The Pokémon Company sued Pocketpair, the developer of Palworld, over its creature-catching mechanics, even though the game differs from Pokémon in many other respects.

7. Active Time Battle – A New Spin on Turn-Based Combat

Patent Owner: Square Enix

Patent Expired: 2012

First introduced in Final Fantasy IV, the Active Time Battle (ATB) system changed RPG combat by replacing rigid turn orders with dynamic timers. It made battles more engaging, forcing players to think on their feet.

Since Square Enix’s patent expired in 2012, developers have had more freedom to experiment with similar timer-based combat mechanics.
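The timer idea behind ATB can be sketched in a few lines. This is an illustrative approximation, not Square Enix’s implementation or the patented method: the `Combatant` class, the `speed` stat, the `GAUGE_FULL` threshold, and the `run_atb` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass

GAUGE_FULL = 100  # hypothetical threshold at which a combatant may act

@dataclass
class Combatant:
    name: str
    speed: int      # gauge units gained per tick (assumed stat)
    gauge: int = 0  # current fill level of the time gauge

def run_atb(combatants, ticks):
    """Fill every gauge each tick and return the names in the order they act."""
    order = []
    for _ in range(ticks):
        for c in combatants:
            c.gauge += c.speed
        # Everyone with a full gauge acts this tick, fullest gauge first.
        ready = sorted(
            (c for c in combatants if c.gauge >= GAUGE_FULL),
            key=lambda c: c.gauge,
            reverse=True,
        )
        for c in ready:
            order.append(c.name)
            c.gauge = 0  # the gauge empties after acting
    return order

party = [Combatant("Hero", speed=50), Combatant("Slime", speed=30)]
print(run_atb(party, ticks=4))  # ['Hero', 'Slime', 'Hero']
```

Instead of alternating in a fixed order, the faster combatant simply acts more often. In an actual game the gauges would keep filling while the player browses menus, which is what creates the time pressure.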

8. Guitar-Controlled Gameplay – Rocksmith’s Innovation

Patent Owner: Ubisoft

Patent Expires: 2029

Unlike Guitar Hero and similar games, which used plastic controllers, Rocksmith let players plug in a real guitar to play along. Ubisoft patented the technology behind real-guitar gameplay, making it harder for anyone else to create a direct competitor.

This patent has arguably discouraged other developers from expanding on the concept, limiting innovation in the music gaming space.

9. Mouse-Controlled Flight – A New Way to Dogfight

Patent Owner: Gaijin Entertainment

Patent Expires: 2033

War Thunder’s developers patented a mouse-controlled airplane system, allowing smoother flight controls compared to traditional keyboard-based setups. While other flight sims have similar mechanics, Gaijin’s specific implementation remains exclusive.

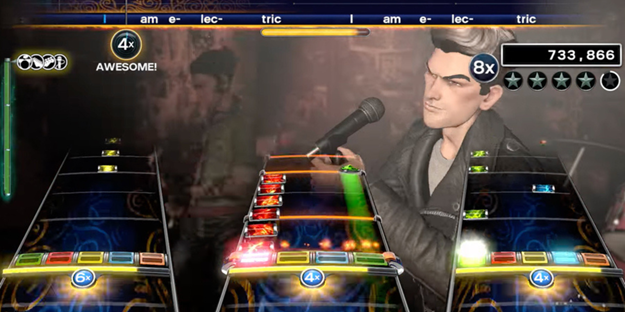

10. Simulating a Rock Band – Battle of the Music Games

Patent Owner: Harmonix

Patent Expires: 2032

Rock Band revolutionized rhythm games by introducing full-band gameplay, complete with guitar, bass, drums, and vocals. Harmonix patented several key elements, protecting its game from direct competition.

These patents also gave Harmonix leverage when Konami sued in 2008, claiming Rock Band infringed its own patents; the two sides later settled.

Final Thoughts: Are These Patents Protecting or Limiting Gaming?

Some patent holders, like EA with its ping system, have kept their mechanics open to other developers, while others, like Warner Bros. with the Nemesis System, arguably stifle innovation by keeping great ideas locked away.

As more patents expire, developers gain creative freedom, but as long as companies continue to patent innovative mechanics, we’ll keep seeing legal battles over who owns what in gaming.

At Intellect Partners, we help innovators protect, manage, and maximize their intellectual property. From patent prosecution and infringement analysis to claim charts and IP strategy, our expert team ensures your ideas stay secure. Whether you’re a startup or a Fortune 500 company, we’ve got you covered. Contact us today!