INTRODUCTION

With each successive generation of communication technology, the primary focus of telecommunications shifts. The 2G and 3G eras centered on human-to-human communication through voice and text. The advent of 4G marked a pivotal shift toward large-scale data consumption, while the 5G era has prioritized connecting the Internet of Things (IoT) and industrial automation systems.

In the forthcoming 6G era, intelligent computation will drive efficiency and improved human experience. While there is still ongoing innovation in 5G, with the introduction of 5G-Advanced standards, companies have already embarked on research for 6G, with plans to make it commercially available by 2030.

CHARACTERISTICS OF 6G TECHNOLOGY

According to Nokia Bell Labs, six technology areas are expected to characterize 6G networks. These areas move the industry from faster connectivity alone toward intelligent, secure, sensor-rich and highly automated communication systems.

Artificial intelligence and machine learning – AI/ML techniques, especially deep learning, have rapidly advanced over the last decade and have already been deployed across domains involving image classification and computer vision, ranging from social networks to security. 5G will unleash the true potential of these technologies; with 5G-Advanced, AI/ML will be introduced into many parts of the network, across multiple layers and functions. From beam-forming optimization in the radio layer to scheduling at the cell site with self-optimizing networks, AI/ML can help achieve better performance at lower complexity.

Spectrum bands – Spectrum is a crucial element in providing radio connectivity. Every new mobile generation requires new pioneer spectrum to fully exploit the benefits of a new technology. Refarming existing mobile communication spectrum from legacy technology to the new generation will also become essential. New pioneer spectrum blocks for 6G are expected to include mid-bands of 7-20 GHz for urban outdoor cells enabling higher capacity through extreme MIMO, low bands of 460-694 MHz for extreme coverage, and sub-THz bands for peak data rates exceeding 100 Gbps.
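To give a feel for how different these bands are physically, the short sketch below converts each band edge to its free-space wavelength and to a half-wavelength antenna spacing. The band edges are the ones quoted above; the 140 GHz figure is only an assumed example of a sub-THz carrier, and the function names are illustrative.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength_m(freq_hz: float) -> float:
    """Free-space wavelength in metres for a carrier frequency in Hz."""
    return C / freq_hz


def half_wavelength_cm(freq_hz: float) -> float:
    """Half-wavelength spacing in centimetres, a common antenna element pitch."""
    return wavelength_m(freq_hz) / 2 * 100


# Band edges from the text; the sub-THz value is an assumed example only.
bands_hz = {
    "low band, 460 MHz": 460e6,
    "low band, 694 MHz": 694e6,
    "mid band, 7 GHz": 7e9,
    "mid band, 20 GHz": 20e9,
    "sub-THz, 140 GHz (assumed example)": 140e9,
}

for name, freq in bands_hz.items():
    print(
        f"{name}: wavelength {wavelength_m(freq) * 100:.2f} cm, "
        f"half-wavelength spacing {half_wavelength_cm(freq):.2f} cm"
    )
```

The shrinking element spacing at higher frequencies is one reason many more antenna elements fit into the same panel area in the mid and sub-THz bands, while the long wavelengths of the low bands favor coverage.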

A network that can sense – One of the most notable aspects of 6G will be its ability to sense the environment, people and objects. The network becomes a source of situational information, gathering signals that bounce off objects and determining their type, shape, relative location, velocity and perhaps even material properties. In combination with other sensing modalities, this sensing mode can help create a mirror or digital twin of the physical world, extending our senses to every point the network touches. Combining this information with AI/ML will provide new insights from the physical world and make the network more cognitive.
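As a rough illustration of how location and velocity can be derived from reflected signals, the sketch below applies the standard monostatic radar relations: range from round-trip delay, radial velocity from Doppler shift, and range resolution from signal bandwidth. It assumes the transmitter and receiver are co-located, and every numeric input is a made-up example value rather than a 6G specification.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def range_from_delay(round_trip_s: float) -> float:
    """Distance to a reflector: the signal travels out and back, hence the /2."""
    return C * round_trip_s / 2


def radial_velocity_from_doppler(doppler_hz: float, carrier_hz: float) -> float:
    """Radial speed of a reflector from the two-way Doppler shift."""
    return doppler_hz * C / (2 * carrier_hz)


def range_resolution(bandwidth_hz: float) -> float:
    """Smallest range separation two reflectors need to be told apart."""
    return C / (2 * bandwidth_hz)


# Assumed example inputs, chosen only for illustration.
print(f"range: {range_from_delay(1e-6):.1f} m")                      # 1 microsecond echo
print(f"speed: {radial_velocity_from_doppler(1e3, 10e9):.2f} m/s")   # 1 kHz shift at 10 GHz
print(f"resolution: {range_resolution(400e6):.3f} m")                # 400 MHz bandwidth
```

Because range resolution improves with bandwidth, the wide channels available in higher bands are what make fine-grained sensing plausible.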

Extreme connectivity – The Ultra-Reliable Low-Latency Communication (URLLC) service that began with 5G will be refined and improved in 6G to address extreme connectivity requirements, including sub-millisecond latency. Network reliability could be amplified through simultaneous transmission, multiple wireless hops, device-to-device connections and AI/ML. Enhanced mobility combined with lower latency and improved reliability will support real-time video communications, holographic experiences and digital twin models updated in real time through the deployment of video sensors.

New network architectures – 5G is the first system designed to operate in enterprise and industrial environments, replacing wired connectivity. As demand and strain on the network increase, industries will require more advanced architectures that support greater flexibility and specialization. 5G is introducing service-based architecture in the core and cloud-native deployments that will be extended to parts of the RAN, with networks deployed in heterogeneous cloud environments involving private, public and hybrid clouds. As the core becomes more distributed and higher layers of the RAN become more centralized, there will be opportunities to reduce cost by converging functions. New network and service orchestration solutions exploiting AI/ML advances will result in an unprecedented level of network automation and lower operating costs.

Security and trust – Networks of all types are increasingly becoming targets of cyber-attacks. The dynamic nature of these threats makes robust security mechanisms imperative. 6G networks will be designed to protect against threats such as jamming. Privacy issues will also need to be considered when new mixed-reality worlds combine digital representations of real and virtual objects.

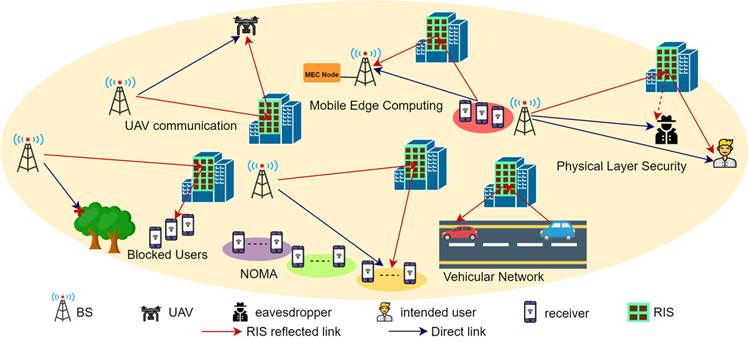

RECONFIGURABLE INTELLIGENT SURFACES (RIS)

A Reconfigurable Intelligent Surface (RIS) is a flat panel of small passive elements, each roughly 1 cm² in area and capable of independently adjusting the phase, and potentially the amplitude, of incident electromagnetic waves. By precisely controlling these elements, an RIS controller can steer the reradiated waves in specific directions. This enables alternative links within a cell and facilitates communication in non-line-of-sight scenarios, supporting extreme connectivity, AI/ML-based signal augmentation, innovative network architecture and optimized bandwidth utilization.
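The steering idea can be sketched with a simple far-field model of a one-dimensional RIS. The code below is a minimal illustration under stated assumptions, not a description of any particular product: elements sit on a line with uniform spacing, both angles are measured from the surface normal and are positive toward the same side, and each element applies an ideal, lossless phase shift. The function names and the example values (64 elements, 3 cm wavelength, half-wavelength spacing) are hypothetical.

```python
import cmath
import math


def steering_phases(n_elements, spacing_m, wavelength_m, theta_in_deg, theta_out_deg):
    """Per-element phase shifts (radians) that redirect a plane wave arriving
    from theta_in so that the reradiated waves add in phase toward theta_out."""
    k = 2 * math.pi / wavelength_m  # wavenumber
    s = math.sin(math.radians(theta_in_deg)) + math.sin(math.radians(theta_out_deg))
    # Each element cancels the path-length phase it sees, modulo one full turn.
    return [(-k * n * spacing_m * s) % (2 * math.pi) for n in range(n_elements)]


def array_factor(phases, spacing_m, wavelength_m, theta_in_deg, theta_obs_deg):
    """Normalized magnitude (0..1) of the reradiated field seen from theta_obs."""
    k = 2 * math.pi / wavelength_m
    s = math.sin(math.radians(theta_in_deg)) + math.sin(math.radians(theta_obs_deg))
    total = sum(
        cmath.exp(1j * (k * n * spacing_m * s + phi)) for n, phi in enumerate(phases)
    )
    return abs(total) / len(phases)


# Assumed example: 64 elements, 3 cm wavelength (about 10 GHz), half-wavelength pitch.
wavelength = 0.03
spacing = wavelength / 2
phases = steering_phases(64, spacing, wavelength, theta_in_deg=30, theta_out_deg=10)

# Scan observation angles in 1-degree steps and find where the response peaks.
best_angle = max(
    range(-90, 91), key=lambda a: array_factor(phases, spacing, wavelength, 30, a)
)
print("peak response at", best_angle, "degrees")
print("response at the specular angle (-30 degrees):",
      round(array_factor(phases, spacing, wavelength, 30, -30), 3))
```

With all phases set to zero, the same model reduces to an ordinary mirror that reflects toward the specular angle; the programmed phase gradient is what moves the beam elsewhere.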

RIS can be implemented as self-configuring elements within wireless network infrastructure, tuning their electromagnetic response to shifting traffic demands and propagation characteristics. RIS is conceptually appealing and offers practical implementation advantages because it does not require energy-hungry radio-frequency (RF) chains. The absence of RF chains makes RIS an energy-efficient and cost-effective alternative to massive MIMO technology, which requires an RF chain for each antenna element and therefore incurs higher hardware cost, complexity and power consumption.

Because RIS is largely passive and requires minimal power to operate, it can be an eco-friendly and cost-effective solution deployable on surfaces such as walls, ceilings, billboards and other infrastructure. However, RIS design still requires careful consideration of coverage range, surface size and the number of elements needed.
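The trade-off between surface size and element count can be sketched with a commonly used idealization: when all N elements are phased perfectly, their contributions add in amplitude, so received power grows roughly with N² relative to a single element. The sketch below uses that relation together with the approximately 1 cm² element area mentioned earlier. It ignores path loss, element losses, phase quantization and near-field effects, so treat it as a back-of-the-envelope aid rather than a design rule; the function names and the 40 dB target are hypothetical.

```python
import math

ELEMENT_AREA_CM2 = 1.0  # approximate element area quoted in the text


def coherent_gain_db(n_elements: int) -> float:
    """Idealized power gain over a single element when N elements add in phase
    (power scales with N squared, i.e. 20*log10(N) in dB)."""
    return 20 * math.log10(n_elements)


def elements_for_gain(target_gain_db: float) -> int:
    """Smallest element count reaching the target gain under the N^2 idealization."""
    # The tiny offset guards against floating-point round-up on exact powers.
    return math.ceil(10 ** (target_gain_db / 20) - 1e-9)


def surface_area_cm2(n_elements: int) -> float:
    """Panel area if elements tile the surface with no gaps (an assumption)."""
    return n_elements * ELEMENT_AREA_CM2


n = elements_for_gain(40.0)  # assumed example target of 40 dB
print(f"{n} elements, about {surface_area_cm2(n):.0f} cm^2, "
      f"gain {coherent_gain_db(n):.1f} dB")
```

In practice the required surface is considerably larger than this idealization suggests, because the signal also suffers path loss on both the transmitter-to-RIS and RIS-to-receiver hops.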

Source: IET Communications RIS article.

PATENT ACTIVITY AND COMPETITIVE LANDSCAPE

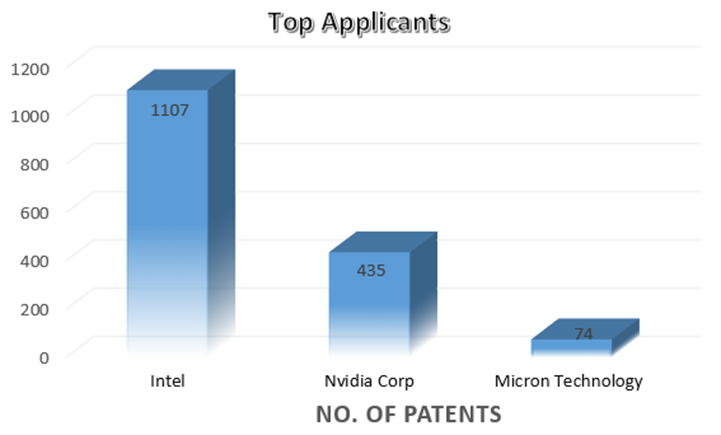

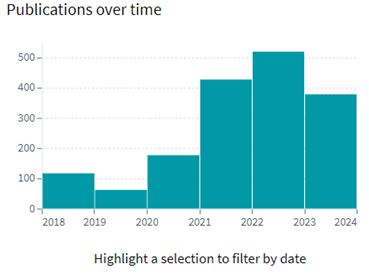

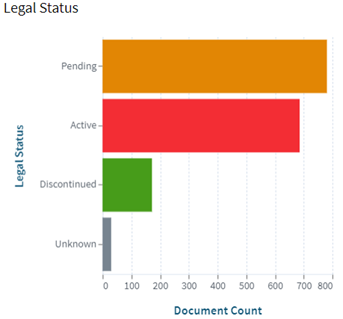

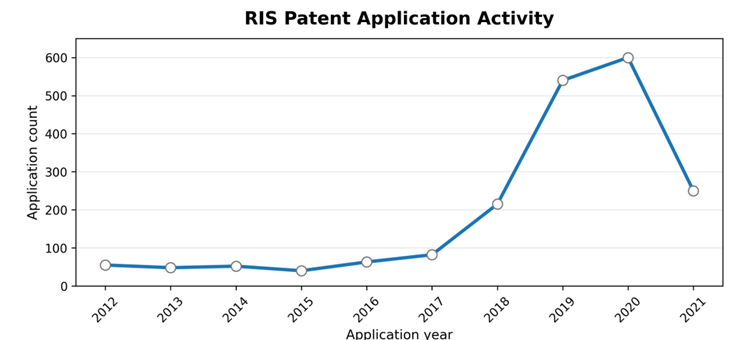

RIS technology is gaining traction among researchers in 5G-Advanced and 6G. After the standardization of 5G in 2019, patenting activity in RIS technology accelerated because RIS promises gains in spectral and energy efficiency without the expense of massive cell densification, while also unlocking numerous future telecommunication use cases.

Source: Patent analysis using Orbit Intelligence.

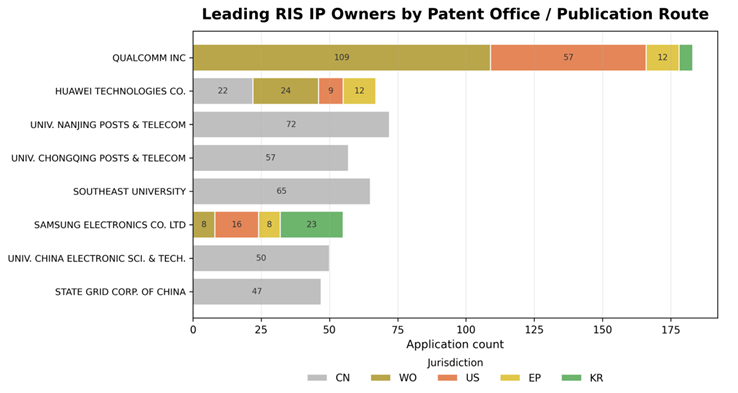

The patent landscape shows that the top owners of RIS-related IP include Qualcomm, Huawei and Samsung. Several Chinese universities are also active in this area, and China accounts for a substantial share of the global RIS patent landscape.

Source: Patent analysis using Orbit Intelligence.

CONCLUSION

6G is expected to extend mobile networks beyond connectivity by embedding intelligence, sensing, automation, security and extreme performance into the network fabric. RIS is highly aligned with this direction: by shaping the wireless propagation environment itself, RIS can create alternative links, improve non-line-of-sight coverage, reduce energy consumption and support new architectures for dense, intelligent and adaptive wireless systems.

As patenting activity and research investment increase, RIS is likely to remain a key enabling technology in the transition from 5G-Advanced toward commercial 6G systems.

REFERENCES

- https://www.nokia.com/bell-labs/research/6g-networks/6g-technologies/

- https://ietresearch.onlinelibrary.wiley.com/doi/full/10.1049/cmu2.12364

- https://www.researchgate.net/publication/382323850_Reconfigurable_intelligent_surface_RIS-assisted_wireless_communications_with_a_virtual_reality_VR_case_study

- https://www.mdpi.com/2076-3417/13/21/11750

- https://arxiv.org/html/2312.16874v2

- https://www.rohde-schwarz.com/uk/solutions/wireless-communications-testing/wireless-standards/6g/reconfigurable-intelligent-surfaces-ris/reconfigurable-intelligent-surfaces-ris_257043.html

- https://www.youtube.com/watch?v=OIN7Dyu7N0A